The best engineers I know are spending their days doing something they're actually terrible at.

They're not bad at writing code. They're incredible at it. But they've stopped doing the thing they're best at, and nobody told them. They just... drifted into it. I did the same thing for way too long at work, while using Claude Code heavily on a large-scale repo migration, and I didn't even notice until I stepped back and looked at how I was actually spending my time.

The preparation IS the work now. That's it.

Not writing the code. Not debugging the code. Not even reviewing. The thing that determines whether Claude one-shots your ticket or hallucinates straight AI garbage is how well you set up the problem before the AI writes a single line.

If you prepare well, Claude will understand the problem and probably nail it on the first try. If you don't, you'll spend three hours fighting an AI that's overconfidently building the wrong thing. And that's three hours of your best engineering skill, your ability to think through problems, completely wasted on cleanup.

So What Did We Actually Become?

Here's what I realized halfway through our migration: we're not writing code anymore — we're curating context.

It's basically air traffic control, right? The controller doesn't fly the planes — but every plane in the sky depends on them to land safely. They're sequencing — which plane goes first, which one holds, which runway is clear. They have a constrained space (the airspace) and if they overload it, things collide. The skill isn't in the flying. It's in the coordination.

That's what we're doing now. The "flying" — the implementation — Claude handles that. But you're the one deciding what context to load, what files to point it at, what order to tackle things in, and what constraints to set. You're managing a constrained space and sequencing work so nothing collides. And if your sequencing is wrong? The whole thing falls apart — doesn't matter how capable the aircraft are.

You're not paid to write every line of code anymore. You're paid to know which 5% of context actually matters right now.

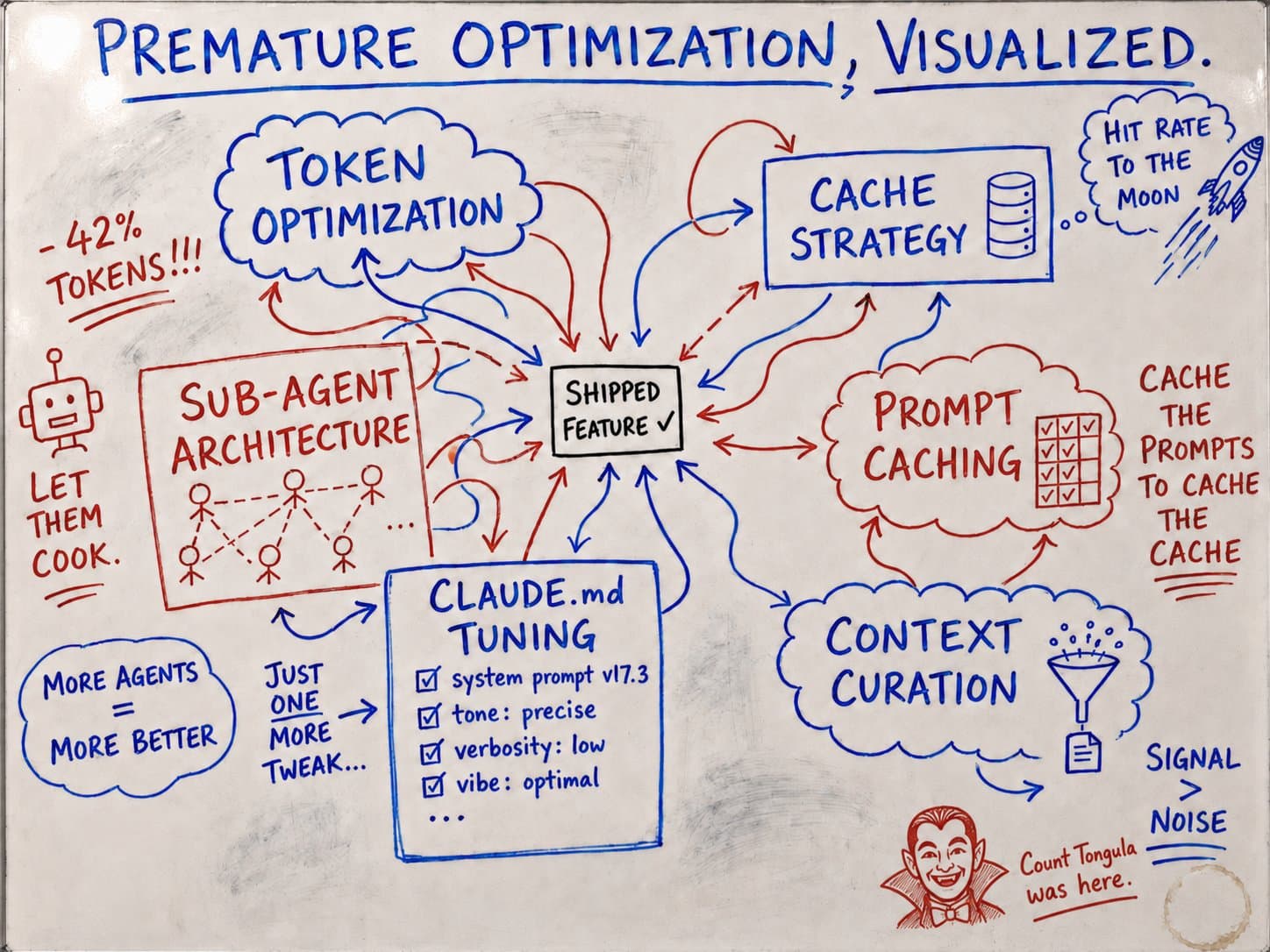

Here's the thing about AI coding tool best practices — they basically all boil down to one constraint: when you ask an AI to solve something complex, it needs to understand the whole picture. Give it fragments and it guesses. Give it noise and it drowns. Fill the context window wrong and Claude doesn't warn you — it just confidently builds the wrong thing. And you'll spend three hours debugging something that should have taken fifteen minutes.

So yeah, every technique I'm about to share? Managing that constraint. You're not writing prompts anymore — you're deciding what the AI actually needs to see.

Here's Where Most People Go Wrong

Everyone wants to give Claude the whole project at once. They all do. Like — "here's my codebase, migrate the thing, figure it out." Claude will hallucinate you into a brick wall.

Claude gets confused when tasks aren't EXTREMELY tightly scoped. And I mean extremely. This is what kills you: hallucinated code that looks right but does absolutely nothing.

One task per prompt. One major change per PR. Build the skeleton first. One bone at a time. Sounds slow. It's way way faster — because each one lands clean.

This matters beyond just code quality too — PR reviewability, QA testing, debugging. When something breaks, you know exactly which change caused it. Smaller, targeted context = better results. The less noise the AI has to parse, the more accurate its output.
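To make "extremely scoped" concrete, here's a minimal sketch of a pre-flight check you could run on a task before handing it to Claude. Everything in it is hypothetical: the `TaskBrief` shape, the `MAX_FILES` budget of 5, and the file paths are assumptions for illustration, not something from our migration or from Claude Code itself.

```python
from dataclasses import dataclass, field

# Assumed budget for illustration; tune it for your own repo.
MAX_FILES = 5


@dataclass
class TaskBrief:
    """One unit of work to hand to the model (hypothetical shape)."""
    goal: str
    files: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)


def scope_problems(brief: TaskBrief) -> list[str]:
    """Return the reasons a brief is too loose; an empty list means it passes."""
    problems = []
    if not brief.files:
        problems.append("no files listed, so the model has to guess where to look")
    elif len(brief.files) > MAX_FILES:
        problems.append(f"{len(brief.files)} files in scope, budget is {MAX_FILES}")
    if not brief.constraints:
        problems.append("no constraints, so nothing says what must not change")
    return problems


# Hypothetical examples: the goals and paths are made up.
too_broad = TaskBrief(goal="Migrate the whole codebase")
scoped = TaskBrief(
    goal="Migrate the orders service to the new HTTP client",
    files=["services/orders.py", "tests/test_orders.py"],
    constraints=["Do not change public function signatures"],
)

print(scope_problems(too_broad))
print(scope_problems(scoped))
```

The check itself is crude on purpose. The point is that "is this task small enough?" becomes a question you answer before the prompt goes out, not after the PR comes back wrong.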

But here's the part nobody talks about: before you systematize anything, do one full task end-to-end manually. Just one. Start to finish. No shortcuts.

That first rep is where you learn everything. Where does Claude struggle? What context does it actually need? Where does it make assumptions? What does the "happy path" look like when it goes right?

For our repo migration, the first task we migrated was messy. Took way longer than it should have. But it taught us exactly what Claude needed to succeed — which files to reference, what order to build dependencies in, what instructions to put in our repo's CLAUDE.md. Every task after that was dramatically faster because we'd done the spike first. Once you've done one successfully, that becomes your reference pattern — and example-driven prompts produce way more consistent results than spec-driven ones. Every. Single. Time.

And here's a subtle one that bites people constantly: tell the AI which layer something belongs in. Explicitly. If your architecture has distinct layers, Claude needs to know which one it's working in, or it'll write duplicate logic across layers. Take a question like "Should the authentication check live in layer A or in layer B?" If you don't specify, Claude will guess. And it will guess wrong.
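One way to make the layer impossible to forget is to bake it into whatever builds your prompt. This is only a sketch under assumptions: `build_prompt`, its parameters, and the `layer_a/orders.py` path are hypothetical names, and the wording of the prompt is one possible phrasing, not a required format.

```python
def build_prompt(task: str, layer: str, reference: str, files: list[str]) -> str:
    """Render a scoped prompt that names the target layer explicitly."""
    if not layer.strip():
        # Refuse to build a prompt that leaves the layer to guesswork.
        raise ValueError("name the layer; otherwise the model will guess")
    file_lines = "\n".join(f"- {path}" for path in files)
    return (
        f"Task: {task}\n"
        f"Layer: {layer}. Keep this logic out of every other layer.\n"
        f"Follow the pattern in: {reference}\n"
        f"Files in scope:\n{file_lines}\n"
    )


# Hypothetical usage: the task, layer name, and path are made up.
prompt = build_prompt(
    task="Add the authentication check for the orders endpoint",
    layer="layer A",
    reference="the first task we migrated by hand",
    files=["layer_a/orders.py"],
)
print(prompt)
```

Raising on a blank layer is the design choice that matters here: the prompt can't be built at all until someone has decided where the logic lives.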

Prep vs no prep